Cockpit AI Agent: Autonomous scenario creation becomes the first step to personalize cockpits

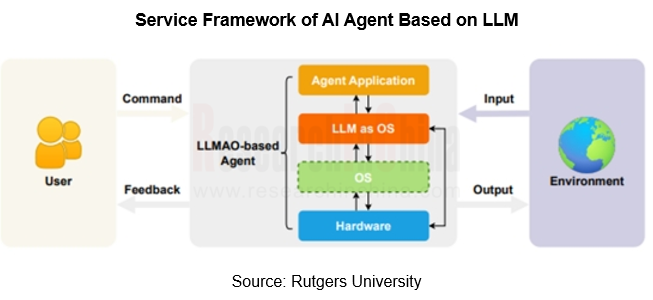

In the AI Foundation Models’ Impacts on Vehicle Intelligent Design and Development Research Report, 2024, ResearchInChina noted that an AI Agent uses a large language model (LLM) as its core computing engine (LLM OS). In the AI service framework, the LLM acts as the AI core and the Agent acts as the AI app. With the reasoning and generation capabilities of AI foundation models, the Agent can create more cockpit scenarios while further improving existing cockpit technologies such as multimodal interaction and voice processing.

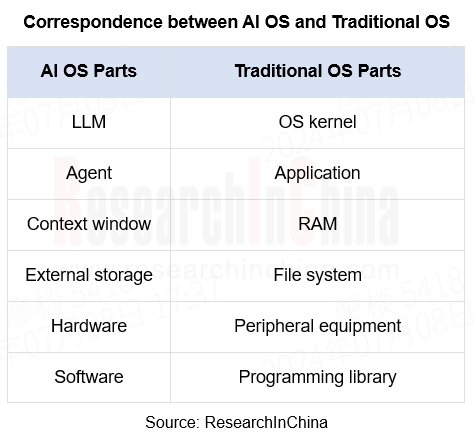

Compared with a traditional OS, an AI OS is programmed in natural language, which is easier for people to operate and makes customized, personalized human-computer interaction more convenient. The Agent responds to user prompts or instructions in natural language and can perform many tasks through its interaction with the physical or digital environment.

Foundation models spawn Agent customized scenarios

Since AI foundation models were installed in cars, they have been widely used in multimodal interaction scenarios. OEMs have started with voice assistants to further improve the intelligent processing of user commands:

Before foundation models were installed in cars, the wake-up rate and the share of effective commands of in-cockpit voice assistants were low;

After foundation models became available in cars, voice recognition became more intelligent, and expression monitoring and gesture control also improved, with effective commands and daily active rates increasing significantly.

AI foundation models further enhance the user experience of smart cockpits through strong context processing and reasoning capabilities. Please refer to the following dialogue:

(Mobility scenario)

-Tongxue! (the name of a voice assistant)

-I am here!

-There seems to be a prairie ahead. Please take a picture for me!

-Wait a moment, and I will capture the beautiful scenery of the prairie for you. I'll let you know when the photo is taken. Is there anything else I can help you with?

-Send it to Moments immediately. I want my friends to have a look at this beautiful scenery.

-Ok, I will post it from your authorized account. Please choose the photo and filter style.

-Just this one, with a caption.

-Ok, three captions have been generated for you. Which one do you like?

-Just this one.

(Office scenario: you receive a phone call)

-There is a phone call from a customer, and I have transferred it to the IVI for you. Would you like to answer it?

-Yes, and turn on the office mode.

-Ok, I will generate an avatar of the customer for you and start the conversation. Do you have any questions or things to discuss? I will record the conversation and provide you with the minutes of the meeting after it is over.

(The avatar is generated)

-Now you can start the conversation.

The scenarios above did not materialize in the cockpit until 2024, when some OEMs installed foundation models in their vehicles.

For example, IM L6 has built the Carlog and City Drive scenarios, in which AI foundation models proactively recommend food and attractions and let users post them on social media:

Carlog: Actively perceives the scene while driving through an AI vision foundation model, uses four cameras to take photos, automatically saves and edits them, and supports one-click sharing to Moments.

City Drive: Works with Volcengine to model nearby food, scenic spots and landmarks in real time on the digital screen, and pushes them according to users' habits and preferences.

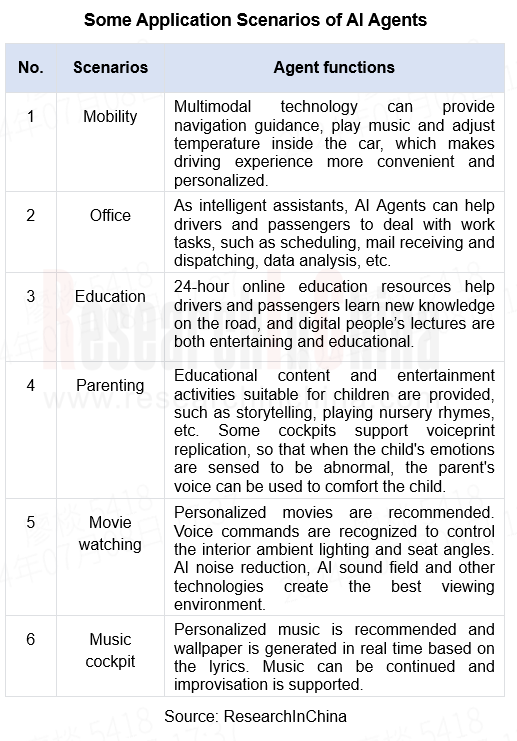

The applicability of foundation models in various scenarios has stimulated users' demand for intelligent agents that can uniformly manage cockpit functions. In 2024, OEMs such as NIO, Li Auto, and Hozon successively launched Agent frameworks, using voice assistants as the starting point to manage functions and applications in cockpits.

Agent service frameworks not only manage cockpit functions in a unified way, but also provide richer scenario modes based on customers' needs and preferences. In particular, they let users customize scenarios, which accelerates the arrival of the cockpit personalization era.

For example, NIO’s NOMI GPT allows users to set up an AI scenario with just one sentence.

Core competence of cockpit Agents

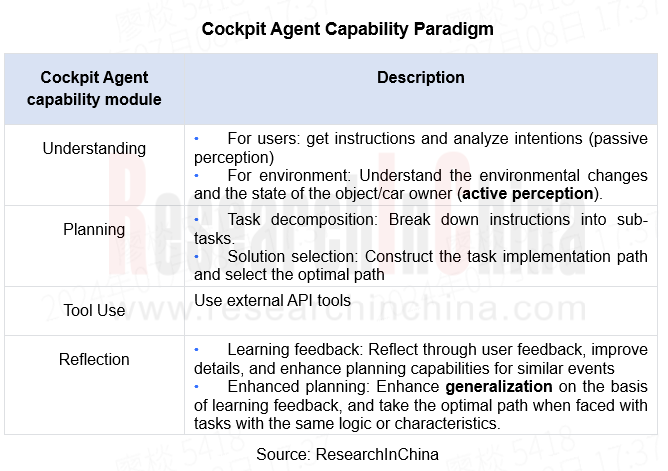

AI Agents in the era of foundation models are built on LLMs, whose powerful reasoning expands the scenarios in which Agents can be applied. In turn, Agents can improve the thinking capability of foundation models through the feedback they obtain during operation. In the cockpit, the Agent capability paradigm can be roughly divided into "Understanding" + "Planning" + "Tool Use" + "Reflection".

When Agents are first deployed in cars, understanding and planning abilities matter most. How well the Agent understands the task goal and which implementation path it chooses directly determine the accuracy of the results, which in turn affects how often users rely on the Agent's scenarios.
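The four capabilities can be pictured as one loop. The following is a minimal sketch only: the class `CockpitAgent`, its method names, the intent labels and the tool names are all hypothetical, and keyword matching stands in for the LLM that a real Agent would call for understanding and planning.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class CockpitAgent:
    """Hypothetical agent: Understanding -> Planning -> Tool Use -> Reflection."""
    tools: Dict[str, Callable[[str], str]]
    lessons: List[str] = field(default_factory=list)

    def understand(self, utterance: str) -> str:
        # Stand-in for LLM intent parsing: simple keyword matching.
        text = utterance.lower()
        if "photo" in text or "picture" in text:
            return "take_photo"
        if "call" in text:
            return "answer_call"
        return "unknown"

    def plan(self, intent: str) -> List[str]:
        # Map an intent to an ordered list of tool names (assumed mapping).
        plans = {"take_photo": ["camera", "share"], "answer_call": ["phone"]}
        return plans.get(intent, [])

    def use_tools(self, steps: List[str], utterance: str) -> List[str]:
        # Run each planned tool that is actually registered.
        return [self.tools[s](utterance) for s in steps if s in self.tools]

    def reflect(self, intent: str, results: List[str]) -> None:
        # Record failures so that later runs (or retraining) can use them.
        if not results:
            self.lessons.append(f"no usable plan for intent '{intent}'")

    def run(self, utterance: str) -> List[str]:
        intent = self.understand(utterance)
        steps = self.plan(intent)
        results = self.use_tools(steps, utterance)
        self.reflect(intent, results)
        return results


agent = CockpitAgent(tools={
    "camera": lambda u: "photo saved",
    "share": lambda u: "shared to Moments",
    "phone": lambda u: "call answered",
})
print(agent.run("Please take a picture for me"))
```

In this sketch, reflection only logs what went wrong; a production Agent would feed such records back into prompts, memory or model updates.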

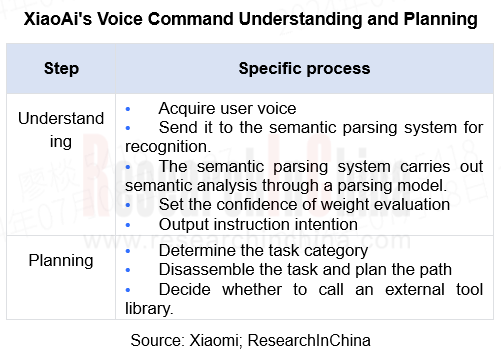

For example, in Xiaomi's voice interaction process, semantic understanding is the hardest part of the entire automotive voice processing chain. XiaoAi handles it with a dedicated semantic parsing model.

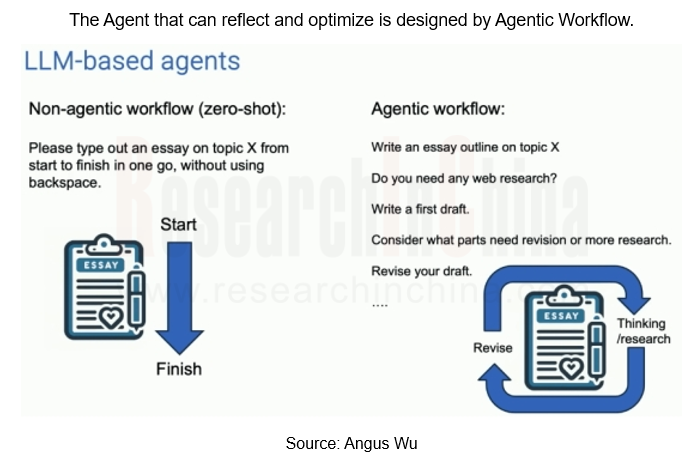

Once Agents enter mass production, personalized cockpits that let users customize scenario modes become the highlight, and Reflection becomes the most important core competence at this stage. It is therefore necessary to build an Agentic Workflow that keeps learning and optimizing.

For example, Lixiang Tongxue offered by Li Auto supports the creation of one-sentence scenarios. It is backed by Mind GPT's built-in memory network and online reinforcement learning capabilities. Mind GPT can remember personalized preferences and habits based on historical conversations. When similar scenarios recur, it can automatically set scenario parameters from historical data to match the user's original intentions.

At the level of AI OS architecture, we take SAIC Z-One as an example:

Z-One connects the LLM kernel (LLM OS) at the kernel layer, where it controls the interfaces of the AI OS SDK and the ASF alongside the original microkernel. The AI OS SDK is scheduled by the LLM and drives the Agent service framework at the application layer. The Z-One AI OS architecture tightly integrates AI with the CPU, and SOA atomic services then connect AI to the vehicle's sensors, actuators and controllers. Built on a terminal-cloud foundation model, this architecture can enhance the computing power of the terminal-side foundation model and reduce operational latency.
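The idea of reusing remembered parameters when a similar scenario recurs can be sketched as follows. This is an illustration only and not Mind GPT's actual mechanism: the class `ScenarioMemory`, the word-overlap (Jaccard) similarity and the 0.5 threshold are assumptions chosen to keep the example small.

```python
from typing import Dict, List, Optional, Set, Tuple


def _tokens(text: str) -> Set[str]:
    # Lowercased whitespace tokens; a real system would use embeddings.
    return set(text.lower().split())


class ScenarioMemory:
    """Hypothetical store of past one-sentence scenarios and their settings."""

    def __init__(self, threshold: float = 0.5) -> None:
        self.threshold = threshold
        self.history: List[Tuple[str, Dict[str, str]]] = []

    def remember(self, request: str, params: Dict[str, str]) -> None:
        self.history.append((request, dict(params)))

    def recall(self, request: str) -> Optional[Dict[str, str]]:
        # Return settings of the most similar past request, if similar enough.
        query = _tokens(request)
        best_score, best_params = 0.0, None
        for past, params in self.history:
            past_tokens = _tokens(past)
            union = query | past_tokens
            score = len(query & past_tokens) / len(union) if union else 0.0
            # ">=" lets a more recent entry win when scores tie.
            if score >= self.threshold and score >= best_score:
                best_score, best_params = score, params
        return dict(best_params) if best_params is not None else None


memory = ScenarioMemory()
memory.remember("nap mode when parked", {"seat": "recline", "ac": "24C"})
print(memory.recall("nap mode when parked at noon"))
print(memory.recall("play rock music"))
```

The second lookup returns `None` because no stored request is similar enough, which is the point at which an Agent would ask the user for settings instead of guessing.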

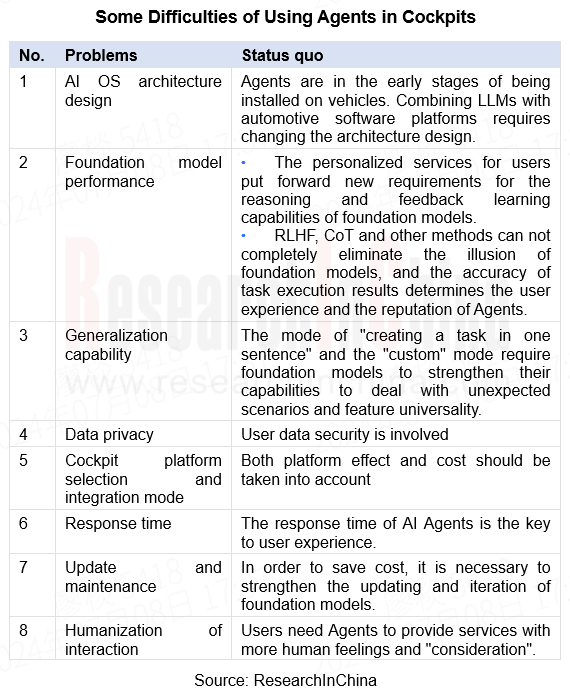

Application Difficulty of Cockpit AI Agents

Agents connect to users and execute commands. In deployment, besides the technical difficulty of running foundation models in cars, they also face scenario-specific difficulties. Across the chain of command reception, semantic analysis, intention reasoning and task execution, the accuracy of the results and the latency of human-computer interaction directly affect the user's riding experience.
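Because latency accumulates over the four stages, it helps to measure each stage separately. The sketch below is a hypothetical harness: `run_pipeline` and the stage functions are made up for illustration, with trivial string operations standing in for real speech and LLM components.

```python
import time
from typing import Callable, Dict, List, Tuple

Stage = Tuple[str, Callable[[object], object]]


def run_pipeline(stages: List[Stage], command: object) -> Tuple[object, Dict[str, float]]:
    """Run stages in order and record each stage's latency in milliseconds."""
    latencies: Dict[str, float] = {}
    value = command
    for name, fn in stages:
        start = time.perf_counter()
        value = fn(value)
        latencies[name] = (time.perf_counter() - start) * 1000.0
    return value, latencies


# Placeholder stages; each would wrap a real model or service in practice.
stages: List[Stage] = [
    ("command_reception", lambda s: s.strip()),
    ("semantic_analysis", lambda s: s.lower()),
    ("intention_reasoning",
     lambda s: {"intent": "open_window" if "window" in s else "unknown"}),
    ("task_execution", lambda i: f"executed {i['intent']}"),
]

result, latencies = run_pipeline(stages, "  Open the WINDOW ")
print(result)
for name, ms in latencies.items():
    print(f"{name}: {ms:.3f} ms")
```

Per-stage timings show where an end-to-end latency budget is being spent, for example whether intention reasoning on the foundation model dominates the total.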

Humanization of interaction

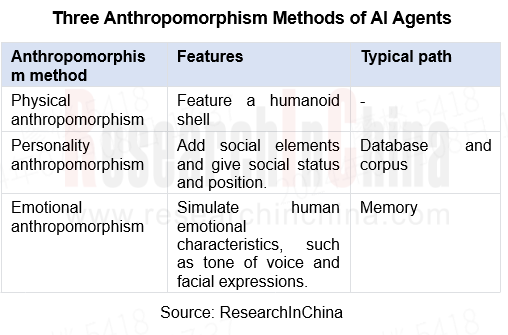

For example, in the "emotional consultant" scenario, Agents should resonate emotionally with car owners and behave in a human-like way. Generally, AI Agents are anthropomorphized in three forms: physical anthropomorphism, personality anthropomorphism, and emotional anthropomorphism.

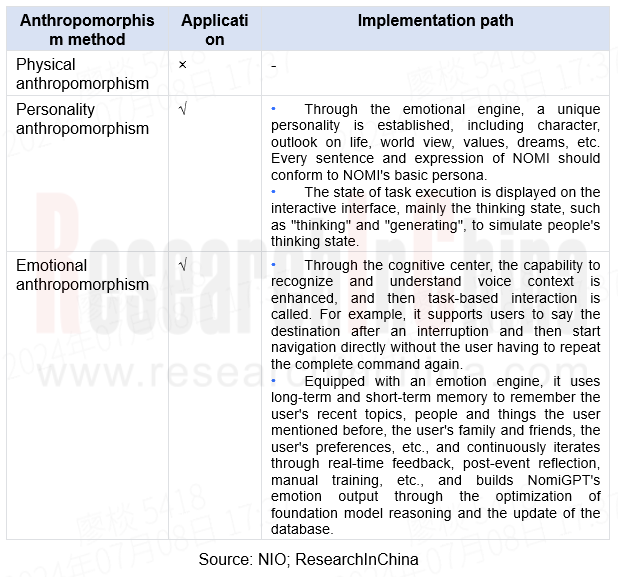

NIO's NOMI GPT uses "personality anthropomorphism" and "emotional anthropomorphism".

Foundation model performance

In the "encyclopedia question and answer" scenario, even after semantic analysis, database search and answer generation, Agents may fail to answer the user's questions accurately because of LLM hallucination, especially when the questions are open-ended.

Current solutions include advanced prompting, RAG + knowledge graph, ReAct, CoT/ToT, etc., none of which can completely eliminate LLM hallucination. In the cockpit, external databases, RAG, self-consistency and similar methods are more often used to reduce how frequently hallucinations occur.

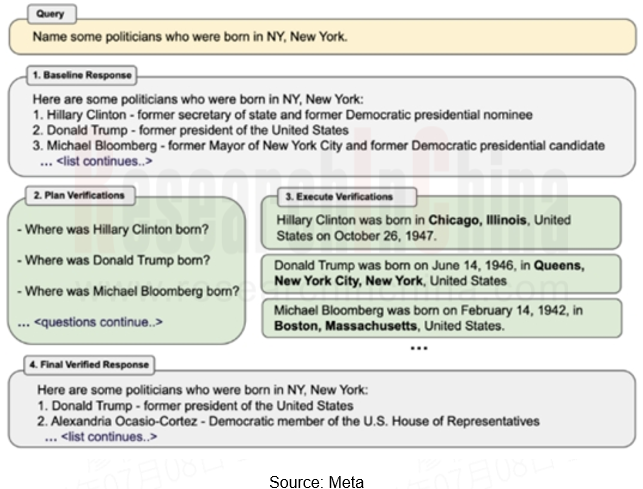
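Of the methods listed above, self-consistency is the simplest to illustrate: sample several answers to the same question and keep the one that most samples agree on. The sketch below assumes a hypothetical `sample` callable that returns one model answer per call; here a fixed list of answers stands in for a real LLM sampled with non-zero temperature.

```python
from collections import Counter
from typing import Callable, Tuple


def self_consistent_answer(
    sample: Callable[[str], str], question: str, n: int = 5
) -> Tuple[str, float]:
    """Sample n answers and return the majority answer with its vote share."""
    # Normalize so that trivially different spellings count as the same vote.
    answers = [sample(question).strip().lower() for _ in range(n)]
    winner, votes = Counter(answers).most_common(1)[0]
    return winner, votes / n


# Stand-in sampler: replays canned answers instead of calling a model.
canned = iter(["Paris", "paris ", "Lyon", "Paris", "Nice"])
answer, agreement = self_consistent_answer(
    lambda q: next(canned), "What is the capital of France?"
)
print(answer, agreement)
```

A low vote share is a useful signal in its own right: an Agent can fall back to retrieval or decline to answer when agreement is weak.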

Some foundation model developers have improved on the above solutions. For example, Meta has proposed reducing LLM hallucination through Chain-of-Verification (CoVe). This method breaks fact-checking down into finer-grained sub-questions to improve response accuracy, mirroring the way humans check facts. It can effectively improve the FActScore metric in long-form generation tasks.

Starting from the user's query, CoVe includes four steps: draft a baseline response, plan verification questions, execute the verifications, and generate the final verified response.
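The CoVe flow can be sketched as a chain of model calls. This is a simplified illustration and not Meta's reference implementation: `llm` is a hypothetical callable that takes a prompt and returns text, and the prompt wording is made up.

```python
from typing import Callable, List, Tuple

LLM = Callable[[str], str]


def chain_of_verification(llm: LLM, query: str) -> str:
    """Draft, plan checks, answer the checks independently, then revise."""
    # 1. Baseline response to the user's query.
    baseline = llm(f"Answer the question.\nQ: {query}")

    # 2. Plan verification questions, one per line.
    plan = llm(
        "List fact-check questions for this answer, one per line.\n"
        f"Q: {query}\nA: {baseline}"
    )
    questions: List[str] = [q.strip() for q in plan.splitlines() if q.strip()]

    # 3. Execute verifications without showing the draft,
    #    so that errors in the draft are not simply repeated.
    checks: List[Tuple[str, str]] = [
        (q, llm(f"Answer briefly.\nQ: {q}")) for q in questions
    ]

    # 4. Produce the final response, revised against the verified facts.
    evidence = "\n".join(f"{q} -> {a}" for q, a in checks)
    return llm(
        "Revise the draft using the verified facts.\n"
        f"Q: {query}\nDraft: {baseline}\nFacts:\n{evidence}"
    )
```

Any function that maps a prompt string to a response string can be passed as `llm`, which keeps the sketch independent of a particular model provider.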
